OpenAI’s new ChatGPT Health product and fresh announcements from Google and Anthropic are arriving as U.S. regulators loosen oversight for some software tools used in healthcare. The timing is putting new attention on how quickly medical AI is moving—and how safety, privacy, and responsibility will be handled as these tools reach more people and clinics.

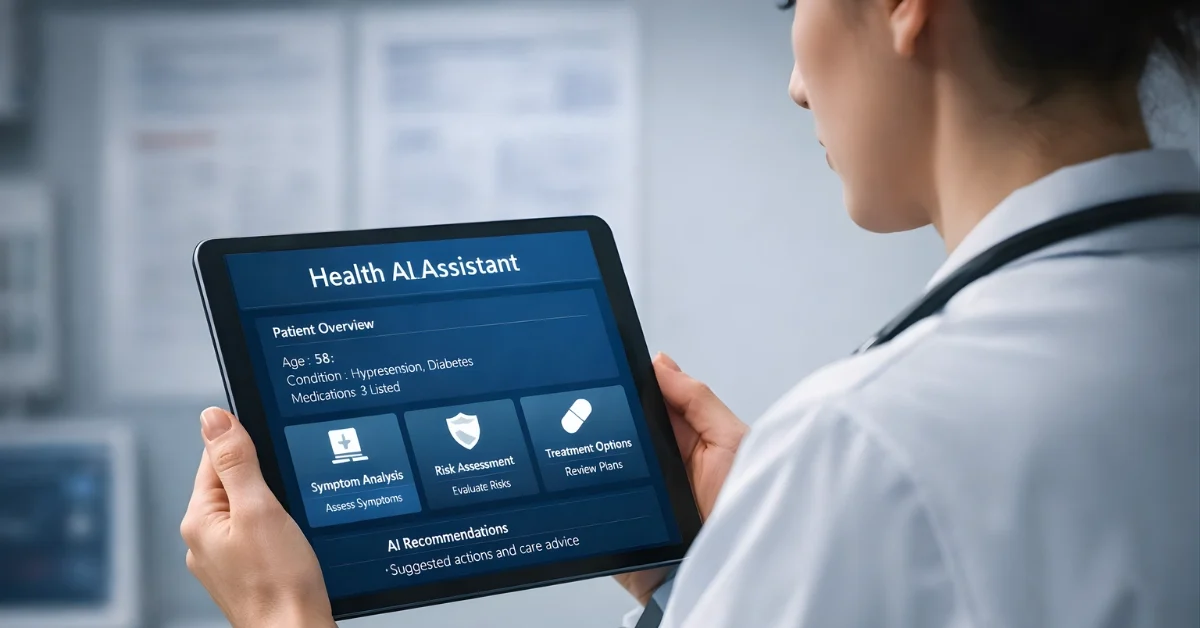

ChatGPT Health is designed as a separate space inside ChatGPT for health and wellness conversations, and OpenAI says it can connect to certain medical records and wellness apps to make responses more relevant. At the same time, the Food and Drug Administration has released updated guidance that changes how some clinical decision support software is treated, potentially allowing more tools to be used without FDA review.

A wave of new medical AI tools

OpenAI, Google, and Anthropic announced specialized medical AI capabilities within days of each other this month, a cluster that one outlet described as a sign of competitive pressure rather than coincidence. That same report also noted that none of these newly announced tools are cleared as medical devices, approved for clinical use, or available for direct patient diagnosis.

Google’s announcement included MedGemma 1.5, which the company said expands an open medical AI model to interpret 3D CT and MRI scans, along with whole-slide histopathology images. Anthropic announced Claude for Healthcare, described as offering HIPAA-compliant connectors to CMS coverage databases, ICD-10 coding systems, and the National Provider Identifier Registry.

The same report said the companies are targeting workflow problems such as prior authorization reviews, claims processing, and clinical documentation, while emphasizing privacy protections and disclaimers. It also stated that OpenAI positions ChatGPT Health as supporting—not replacing—clinical judgment, and that OpenAI says Health is not intended for diagnosis or treatment.

What ChatGPT Health is offering

OpenAI describes ChatGPT Health as a dedicated experience intended to bring a user’s health information together with ChatGPT to help people feel more informed and prepared when navigating health and wellness. OpenAI also says health is one of the most common uses of ChatGPT, and that—based on de-identified analysis—over 230 million people globally ask health and wellness questions on ChatGPT every week.

ChatGPT Health is built as a separate space within ChatGPT, and OpenAI says it uses added protections for sensitive health information, including purpose-built encryption and isolation to keep health conversations compartmentalized. OpenAI says conversations in Health are not used to train its foundation models.

OpenAI says users can securely connect medical records and certain wellness apps, including Apple Health, Function, and MyFitnessPal, and that medical record integrations and some apps are available in the U.S. only. OpenAI also says it partners with b.well to enable access to trusted U.S. healthcare providers’ data, and that users can remove access to medical records.

OpenAI says access will start with a small group of early users via a waitlist, and that it plans to expand availability on web and iOS in the coming weeks. It describes eligibility as covering users on certain plans outside the European Economic Area, Switzerland, and the United Kingdom. TechCrunch also reported that the feature is expected to roll out in the coming weeks.

New questions about safety and accuracy

TechCrunch highlighted a core risk of using large language models for medical advice: these systems generate responses by predicting likely text, not by checking what is true, and they can hallucinate. TechCrunch also noted OpenAI’s terms of service stating ChatGPT is “not intended for use in the diagnosis or treatment of any health condition.”

In its own announcement, OpenAI similarly says ChatGPT Health is designed to support—not replace—medical care, and is not intended for diagnosis or treatment. OpenAI also says it developed ChatGPT Health in close collaboration with physicians and created an evaluation framework called HealthBench, which it describes as using physician-written rubrics to judge quality in practice.

Separately, one report cautioned that strong performance on medical benchmarks is not the same as clinical validation in real-world settings. That report added that errors in medicine can have life-threatening consequences, making the jump from benchmark results to practical clinical utility more complicated than in many other AI use cases.

FDA guidance shifts the landscape

STAT News reported that on Jan. 6 the FDA released updated guidance for clinical decision support tools, and that the changes relax key medical device requirements. STAT News also argued that, under the new approach, many generative AI tools that offer diagnostic suggestions or handle supportive tasks like medical history-taking could reach clinics without FDA vetting.

MedTech Dive reported that the FDA released two final guidance documents loosening regulations for certain wellness and software products, and that FDA Commissioner Marty Makary announced the changes at the Consumer Electronics Show (CES). MedTech Dive also reported that Makary said the changes would “promote more innovation with AI in medical devices.”

On wearables, MedTech Dive reported that the FDA’s guidance gives broader leeway to devices that provide readings of heart rate, blood pressure, and blood glucose, as long as they are intended solely for wellness purposes. MedTech Dive also described examples in the guidance, including a wrist-worn wearable that tracks sleep, pulse rate, and blood pressure. It noted that the guidance appears to contradict a previous warning letter the agency sent to wearable company Whoop.

On software, MedTech Dive reported that the FDA made significant changes to how it regulates clinical decision support tools. Under the updated guidance, software that provides a single medical recommendation can now be exempt from regulation, where previously it would have been considered a medical device. MedTech Dive also reported that the guidance includes examples involving software that predicts cardiovascular risk and software that summarizes radiologists’ findings, while noting that direct image analysis to create a report would still be regulated.

Where companies say adoption is heading

One report said real-world deployments are being scoped carefully, with institutional adoption concentrating on administrative workflows like billing, documentation, and drafting protocols, rather than direct clinical decision support. That report also described examples including Novo Nordisk using Claude for document and content automation focused on regulatory submission documents, and Taiwan’s National Health Insurance Administration applying MedGemma to extract data from 30,000 pathology reports for policy analysis.

STAT News pointed to separate developments that it said, together with the FDA guidance, shift the healthcare AI landscape, including Utah starting a pilot with Doctronic for autonomous AI prescription refills and OpenAI debuting ChatGPT Health. STAT News also said these developments could create cautious optimism around access and navigation challenges in U.S. healthcare, while increasing the need for safety research.