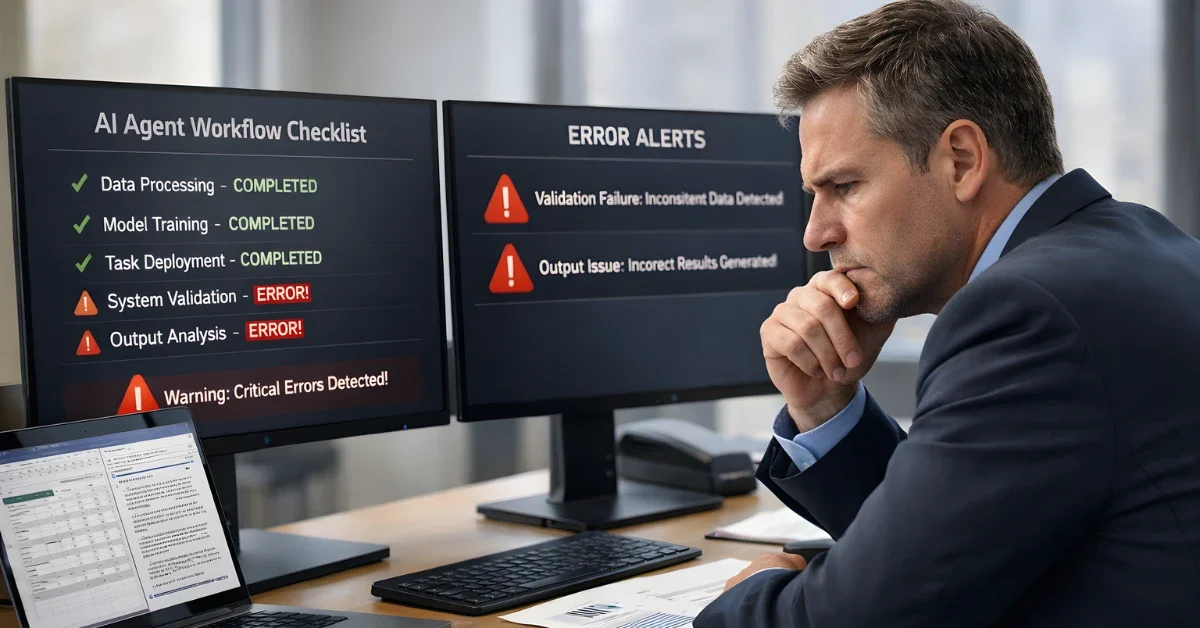

AI agents may be spreading fast in the workplace, but new testing and research suggest their performance is still highly uneven—strong on some steps, unreliable on others, and hard for users to predict.

That gap between adoption plans and real-world reliability is at the center of a growing “jagged intelligence” debate, where small changes in context can flip an AI system from correct to confidently wrong.

Benchmark results show steep failure rates

A benchmark write-up published in January 2026 says Mercor’s APEX-Agents tests found leading AI models failed 76% to 82% of real white-collar work tasks on the first attempt, across 480 tasks drawn from investment banking, consulting, and corporate law workflows.

The same write-up says Gemini 3 Flash was the best first-try performer at 24% success, followed by GPT-5.2 at 23%, while Claude Opus 4.5 and Gemini 3 Pro each scored 18.4%.

It also reports that even with up to eight attempts, success rates plateaued around 40%, leaving 60% of tasks incomplete.
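One way to read the plateau is to compare it with what eight retries would yield if each attempt were an independent draw. The sketch below is only that comparison, not part of the benchmark: it assumes the best reported first-try rate (24%) applies, although the write-up as summarized here does not say which model the roughly 40% figure refers to.

```python
# Hypothetical baseline: treat every attempt as an independent trial.
first_try_success = 0.24   # best first-attempt rate reported in the write-up
attempts = 8               # maximum number of attempts reported
reported_plateau = 0.40    # approximate success rate after up to eight attempts

# Probability that at least one of `attempts` independent tries succeeds.
independent_baseline = 1 - (1 - first_try_success) ** attempts

print(f"independent-retry baseline: {independent_baseline:.1%}")
print(f"reported plateau:           {reported_plateau:.1%}")
```

Under that assumption the baseline comes out near 89%, well above the reported plateau. That gap is consistent with failures clustering on the same tasks rather than occurring at random, though the figures alone do not prove it.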

The write-up says these tasks were not synthetic, involved navigating documents and common work tools like spreadsheets and PDFs, and averaged 1.8 hours of expert-estimated human effort.

It adds that performance degraded after 35 minutes of task time and that doubling task duration quadrupled the failure rate, a pattern the write-up describes as exponential rather than linear scaling of failures.
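Taken at face value, the doubling-to-quadrupling figure pins down how steep that scaling is. The short calculation below assumes failures follow a power law in task duration, which is an illustrative assumption rather than something the write-up states, and solves for the exponent.

```python
import math

duration_ratio = 2.0   # task duration doubles (figure from the write-up)
failure_ratio = 4.0    # failure rate quadruples (figure from the write-up)

# Assumed form: failure ~ duration ** k, so failure_ratio == duration_ratio ** k.
k = math.log2(failure_ratio) / math.log2(duration_ratio)

print(f"implied exponent k = {k}")
```

An exponent of 2 means failures grow with the square of duration, which is faster than linear. A strictly exponential curve would instead multiply failures by a fixed factor for each added unit of time, and a failure rate cannot exceed 100%, so the relationship can only hold over a limited range.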

The article cites Mercor CEO Brendan Foody on a key stumbling point: models struggled to track down information across multiple domains. It concludes that “No model is ready to replace a professional end-to-end.”

What “artificial jagged intelligence” means

In a January 2026 paper, economist Joshua S. Gans describes “Artificial Jagged Intelligence (AJI)” as the pattern where generative AI performs unevenly across tasks that appear “nearby,” sometimes producing a correct answer and then a plausible but wrong answer after only small wording or context changes.

Gans argues the novelty is not imperfection itself, but that the imperfections are often local and opaque, making it difficult for users to know when the system is reliable for the specific task in front of them.

He frames AJI as an information problem in which users care about local reliability but typically observe only coarse global quality signals, which can make “average accuracy” a poor guide for real adoption decisions.

Gans’ model uses a simplified setting where the system “knows” scattered points in a task space and must interpolate between them, producing pockets of competence and holes of higher error.

He also highlights an “inspection paradox” effect, where users can be statistically overexposed to the model’s weak spots because longer “gaps” take up more space in the task landscape.
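The inspection-paradox effect can be illustrated with a small simulation. The setup is a simplification chosen for this sketch, not Gans' specification: 50 "known" points are scattered uniformly on a one-dimensional task line, and tasks arrive uniformly at random. The names `known`, `gaps`, and `gap_containing` are hypothetical.

```python
import bisect
import random

random.seed(0)  # fixed seed so the sketch is reproducible

# Assumed setup: 50 known points scattered uniformly on a unit task line.
known = sorted(random.random() for _ in range(50))
edges = [0.0] + known + [1.0]
gaps = [b - a for a, b in zip(edges, edges[1:])]

# Average gap length when every gap counts equally.
mean_gap = sum(gaps) / len(gaps)

# Exact length-biased average: each gap is weighted by its own length,
# because a random task lands in a gap with probability equal to that length.
length_biased_mean = sum(g * g for g in gaps) / sum(gaps)

def gap_containing(task):
    """Return the length of the gap that a task position falls into."""
    i = bisect.bisect_right(edges, task) - 1
    return gaps[min(i, len(gaps) - 1)]

# Empirical check: sample random tasks and average the gap each one hits.
tasks = [random.random() for _ in range(100_000)]
mean_gap_seen = sum(gap_containing(t) for t in tasks) / len(tasks)

print(f"average gap:                {mean_gap:.4f}")
print(f"average gap a task lands in: {mean_gap_seen:.4f}")
```

In this toy setting the gap a random task lands in is longer on average than the typical gap, because wide gaps cover more of the line. That is the sense in which users can be overexposed to weak spots.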

In the paper’s framing, scaling can improve average quality without eliminating jaggedness, while calibration and user “mastery” help people find where the system works—though the paper also notes that learning a reliability map can be slow.

Adoption push meets deployment friction

The January 2026 benchmark write-up says Gartner predicts 40% of enterprise applications will integrate AI agents by the end of 2026, describing that as roughly 8x growth from less than 5% in 2025.

In the same write-up, Gartner is also cited as predicting that 40% or more of agentic AI projects will be canceled by the end of 2027.

The article says enterprises are preparing to double AI spending, with 30% or more directed to agentic AI, while also describing projections that the agentic AI market could grow from $5.2 billion in 2024 to $200 billion by 2034.
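For scale, the market projection can be converted into an implied compound annual growth rate. The calculation below uses only the two endpoints quoted above and assumes smooth compounding over the ten years between them, which the projection itself may not assume.

```python
start_value = 5.2     # USD billions, 2024 (figure from the write-up)
end_value = 200.0     # USD billions, 2034 (figure from the write-up)
years = 2034 - 2024

# Compound annual growth rate implied by the two endpoints.
cagr = (end_value / start_value) ** (1 / years) - 1

print(f"implied CAGR: {cagr:.1%}")
```

That works out to roughly 44% growth per year, sustained for a decade.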

On implementation challenges, the write-up cites results from “enterprise surveys,” including a survey of 306 AI agent practitioners in which reliability issues pushed teams to abandon long-running tasks and stick to simpler workflows.

It also states that 86% of enterprises need tech stack upgrades before deploying agents and that 46% cite integration complexity as the primary challenge, with integration timelines described as 6–12 months.

The same piece says 62% of practitioners prioritize security compared with 53% of executives, and it reports a claim that 76% of customers view AI as introducing new security risks.

NeurIPS 2025 spotlight on “jagged” behavior

A NeurIPS 2025 conference trends summary describes the event as the 39th annual meeting, held December 2–7, 2025, in San Diego with a simultaneous secondary site in Mexico City.

It reports the conference processed 21,575 valid main-track submissions and accepted 5,290 papers, an acceptance rate of about 24.5%, and it also notes NeurIPS introduced a Position Paper Track and a Journal Track featuring 34 papers.

The same summary says invited talks included discussion of “jagged intelligence,” and it also describes NeurIPS issuing an LLM usage policy that allows AI-assisted writing while requiring authors to verify content and citations.