Apple has opened its foundational AI model to third-party developers, giving app makers direct access to the on-device large language model behind Apple Intelligence and putting outside developers at the center of the company’s latest WWDC strategy. The announcement marks a notable shift for Apple, which has long kept tight control over its software ecosystem and is now making developer access a key part of its AI push.

At the same time, Apple’s broader rollout remained cautious. The company focused its WWDC presentations on practical additions such as live translation, call screening, and expanded visual intelligence, while avoiding the kind of sweeping AI claims that have defined rival launches from companies such as OpenAI and Google.

Developer Access Expands

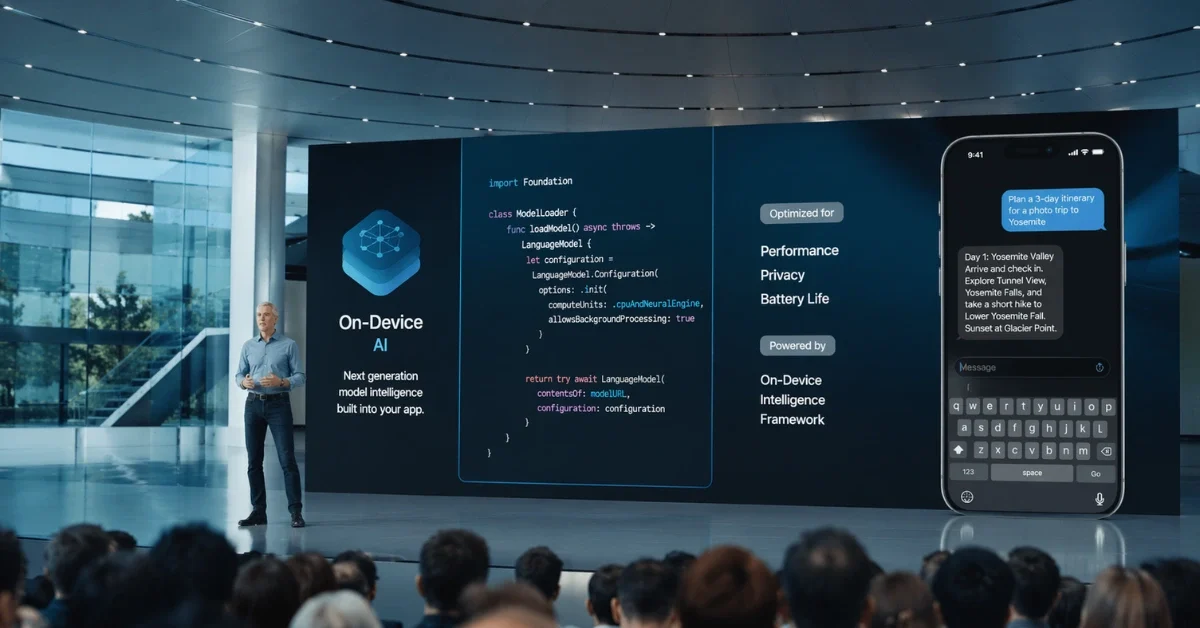

Apple said developers can tap directly into its on-device model through a new Foundation Models framework. According to reports from the conference, the model has about 3 billion parameters and runs entirely on the device, in line with Apple’s privacy-first approach. The trade-off is that a model this small is less capable than larger cloud-based systems at more complex tasks.

The framework is designed to make adoption easy. One report said developers can integrate Apple Intelligence features with as few as three lines of Swift code. The framework also supports guided generation, in which the model’s output is constrained to a Swift type the developer defines, and tool calling, which lets the model invoke code the app provides. Apple also said developers will have access only to the on-device model, not to the cloud-based models that run on Apple’s own Private Cloud Compute servers.
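To give a sense of what that looks like in practice, here is a minimal sketch. It assumes the `FoundationModels` module and its `LanguageModelSession`, `SystemLanguageModel`, `@Generable`, and `@Guide` APIs as Apple presented them at WWDC; details may change between releases, so Apple’s developer documentation remains the authority. `TripSuggestion` and `suggestTrip(to:)` are hypothetical names chosen for illustration, and tool calling is not shown. The “three lines” figure presumably refers to the core pattern here: import the module, create a session, and call `respond`.

```swift
import FoundationModels

// Guided generation: instead of free text, the model fills in this Swift type.
// `TripSuggestion` and its fields are hypothetical, for illustration only.
@Generable
struct TripSuggestion {
    @Guide(description: "A short, catchy title for the trip")
    var title: String

    @Guide(description: "Three activities to try at the destination")
    var activities: [String]
}

// Hypothetical helper: returns nil when the on-device model can't be used.
func suggestTrip(to destination: String) async throws -> TripSuggestion? {
    // The model may be unavailable, e.g. on unsupported hardware or when
    // Apple Intelligence is turned off, so check before prompting.
    guard case .available = SystemLanguageModel.default.availability else {
        return nil
    }

    // The core pattern: create a session and send it a prompt.
    let session = LanguageModelSession()
    let prompt = "Suggest a weekend trip to \(destination)."
    let response = try await session.respond(
        to: prompt,
        generating: TripSuggestion.self
    )
    // `content` is already a typed TripSuggestion, so no JSON parsing is needed.
    return response.content
}
```

Returning an optional keeps the availability check explicit: because developers get only the on-device model, an app has to plan for devices where it simply isn’t there.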

Apple paired that move with deeper AI support inside Xcode. According to reports, Xcode 26 can offer built-in ChatGPT access without requiring an account, accept API keys from other providers, and run local models on Apple silicon Macs. Apple also plans to offer both its own and OpenAI’s code-completion tools in its developer software.

Consumer Features Stay Practical

For users, Apple emphasized everyday conveniences rather than a dramatic reinvention of the iPhone experience. The company introduced Call Screening, which answers calls from unknown numbers, asks callers why they are calling, and shows the user a transcription of the reply before the phone rings. Apple also said live translation is coming to phone calls and that developers will be able to bring the same translation technology into their own apps.

Apple’s Visual Intelligence tools are also expanding. The feature now goes beyond camera-based lookups to analyze content on the iPhone screen; Apple’s example was spotting a jacket online and then finding a similar item for sale through an app already installed on the device. Third-party integration is part of that effort as well: one report said Apple extended Visual Intelligence capabilities to outside developers through enhanced App Intents.

A Measured Strategy

That steady tone stood out because Apple’s AI messaging has faced heavy scrutiny since earlier promises about Siri and Apple Intelligence failed to fully materialize. Analysts quoted in coverage of the event described this year’s announcements as incremental, and Apple shares closed 1.2 percent lower after the conference. Others said Apple now appears more focused on delivering working tools than on repeating ambitious visions that are not ready to ship.

Earlier reporting had signaled this direction. In May 2025, Reuters, citing Bloomberg, reported that Apple was preparing software development kits and related frameworks so outside developers could build AI features with Apple’s models, beginning with smaller on-device systems rather than more advanced cloud models. A later report also said Apple was considering replacing Core ML with a rebranded Core AI framework as part of a broader overhaul tied to iOS 27, iPadOS 27, and macOS 27.

Broader AI Reset

Separate reports have linked that wider AI reset to a Siri overhaul. Republic World, citing Bloomberg’s Mark Gurman, reported in March 2026 that Apple was testing a standalone Siri app and a new “Ask Siri” feature across its software platforms. Built In reported that Apple has been leaning on partnerships with OpenAI, Anthropic, and Google as it works on its own foundation models and a larger Siri upgrade. Together, those reports suggest Apple is trying to strengthen its developer tools, improve practical AI features, and rebuild confidence without promising more than it can deliver right away.