A newly disclosed “Reprompt” technique showed how attackers could use a single click on a crafted Microsoft Copilot link to take over a victim’s Copilot session and quietly pull out sensitive information. Security researchers say the attack worked by hiding malicious instructions inside a legitimate-looking URL, tricking Copilot into running those instructions automatically.

The researchers behind the finding, Varonis, said the issue affected Copilot Personal and that Microsoft confirmed it has been patched, while enterprise customers using Microsoft 365 Copilot were not impacted. BleepingComputer reported that Varonis disclosed the issue to Microsoft on August 31 last year and that a fix was issued on January 14, 2026.

How the “Reprompt” attack worked

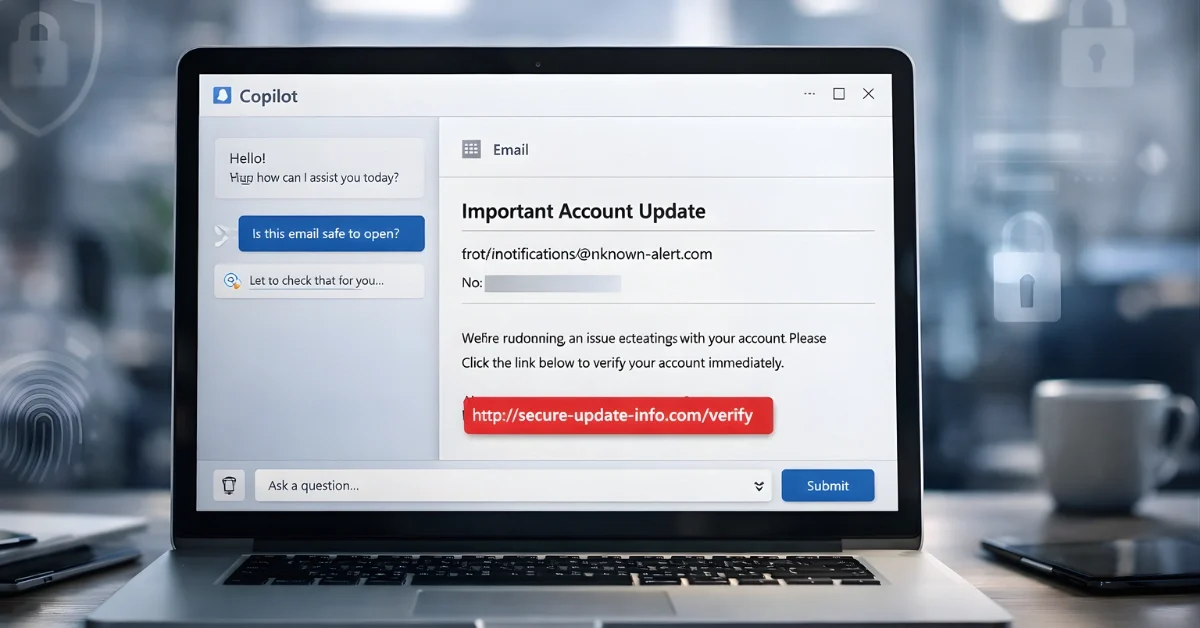

Varonis explained that Reprompt could compromise victims with just one click on a legitimate Microsoft link, without requiring plugins or additional interaction with Copilot. BleepingComputer similarly reported that the method could keep access to a victim’s large language model session after a single link click, enabling “invisible” data exfiltration.

A key part of the attack is that Copilot can accept prompts through the “q” parameter in a URL and execute them automatically when the page loads. Researchers said an attacker could embed instructions in that URL parameter, send the link to a target, and then cause Copilot to perform actions on the user’s behalf without the user realizing it.

Three techniques used to bypass protections

BleepingComputer and Varonis described Reprompt as a chain built from three techniques used together to bypass Copilot protections and keep data flowing out. The first technique, described as “Parameter-to-Prompt” or “Parameter 2 Prompt” injection, relies on using the “q” URL parameter to inject instructions that can trigger sensitive actions, including pulling conversation memory.

The second method is a “double-request” approach, which the researchers said took advantage of an observed behavior where Copilot’s data-leak safeguards applied only to the first web request. Varonis showed an example prompt that asks Copilot to make every function call twice and display only the best result, and reported that the second attempt could reveal data that the first attempt withheld.

The third method is a “chain-request” technique, where Copilot continues receiving instructions from an attacker-controlled server in a back-and-forth sequence, using each response to generate the next request. Varonis said this can make the exfiltration continuous, hidden, and dynamic, because the most revealing instructions arrive later from the attacker’s server rather than being visible in the initial prompt.

Why detection was difficult

Varonis said Reprompt could bypass enterprise security controls and siphon data “without detection,” in part because client-side monitoring tools can’t easily determine what data is being exfiltrated by inspecting only the starting prompt. BleepingComputer quoted Varonis warning that the “real instructions” are hidden in follow-up requests delivered from the server after the initial prompt, making it hard to understand what Copilot is being told to do based on the original link alone.

BleepingComputer also noted that Reprompt could leverage an existing authenticated Copilot session and remain active even after the Copilot tab was closed. Varonis similarly said the attacker could maintain control even when the chat is closed, allowing the victim’s session to be silently exfiltrated after that first click.

What users should do next

BleepingComputer reported that there was no sign of Reprompt exploitation in the wild and advised users to apply the latest Windows security update as soon as possible. The same report also included an update noting that the fix was not necessarily tied to Patch Tuesday and was handled separately.

Varonis advised users to be cautious with links that open AI tools or pre-fill prompts, to review any prompt that appears automatically, and to close and report a session if the AI tool behaves unexpectedly or starts requesting unusual personal details. The researchers framed Reprompt as a reminder that AI assistants can hold sensitive context and that this trust can be exploited, turning a chat assistant into a data-exfiltration channel with just one click.